What is ThinKiosk?

A Windows-based, software-defined, scalable PC-to-thin-client conversion solution that delivers a unified end-user experience on all converted endpoints.


Secure thin clients for unified endpoint management

ThinKiosk installs on any device running Windows. It creates a secure, isolated shell that runs on top of Windows but, crucially, blocks user access to the underlying OS.

IT teams can then configure and enforce a strict security policy on each device, as well as push patches and updates for applications, firewalls, firmware, etc.

Key features

Locks down endpoints, restricting OS access

Role-based admin for strict access controls

Unified endpoint management at scale

Customizable workspace UI & branding

Software-defined: no device purchase or OS wipe needed

Simplify day-to-day IT tasks to reduce workload

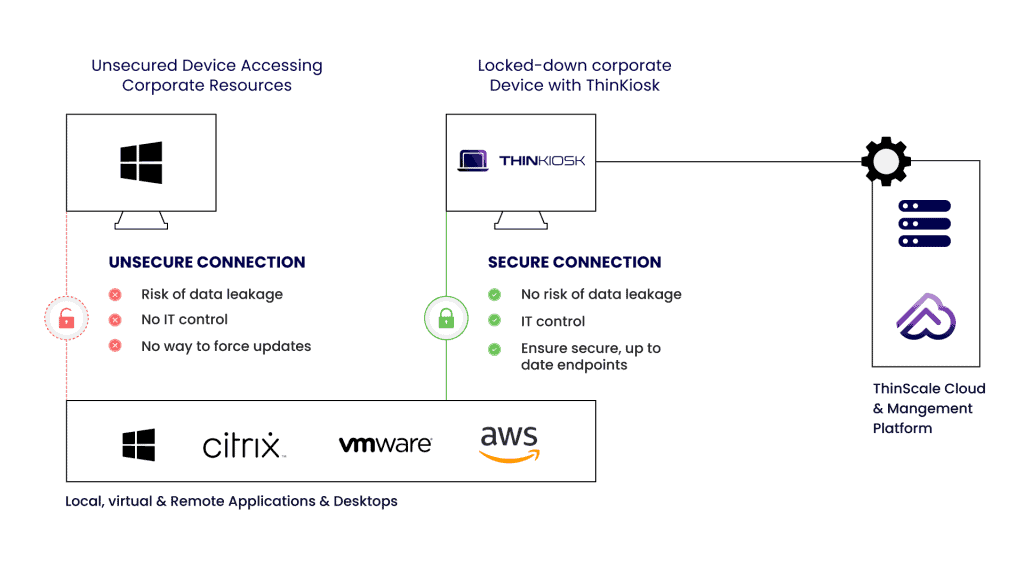

How does ThinKiosk work?

ThinScale’s ThinKiosk installs on any supported Windows operating system and creates a new, isolated secure shell that blocks the underlying OS.

ThinKiosk enforces its own security policy (configurable by your IT team) and ensures that the devices are secure and compliant.

Anti-malware features, data leakage prevention, and authenticator integration align with best practices for Data Loss Prevention (DLP).

ThinScale provides a unified view of each endpoint, with the ability to control, update, and audit it in depth.

All data created during the secure session is saved to a hidden, BitLocker-encrypted temporary drive that cannot be read by unapproved processes.

External USB storage devices can be blocked without stopping the use of other USB devices such as headphones or keyboards.
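As an illustration of how storage can be blocked while headphones and keyboards keep working, the sketch below filters devices by their USB interface class. The class codes are the standard USB-IF values; the `UsbDevice` type, the `BLOCKED_CLASSES` policy, and the `is_allowed` function are hypothetical names made up for this example and do not describe ThinKiosk's actual implementation or configuration.

```python
from dataclasses import dataclass

# Standard USB-IF base class codes (the bInterfaceClass field).
USB_CLASS_AUDIO = 0x01
USB_CLASS_HID = 0x03           # keyboards, mice, headset buttons
USB_CLASS_MASS_STORAGE = 0x08  # flash drives, external disks

# Hypothetical policy: interface classes that are never allowed.
BLOCKED_CLASSES = frozenset({USB_CLASS_MASS_STORAGE})


@dataclass(frozen=True)
class UsbDevice:
    """Illustrative stand-in for a connected device and its interfaces."""
    name: str
    interface_classes: tuple


def is_allowed(device, blocked=BLOCKED_CLASSES):
    """Return True if none of the device's interfaces is in a blocked class."""
    return not any(cls in blocked for cls in device.interface_classes)


devices = [
    UsbDevice("headset", (USB_CLASS_AUDIO, USB_CLASS_HID)),
    UsbDevice("keyboard", (USB_CLASS_HID,)),
    UsbDevice("flash drive", (USB_CLASS_MASS_STORAGE,)),
]

# Only devices with no mass-storage interface pass the filter.
allowed = [d.name for d in devices if is_allowed(d)]
print(allowed)
```

Checking every interface rather than only the first matters for composite devices: a gadget that exposes both a keyboard and a storage interface would still be rejected under this policy.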

“It used to take an hour and a half to configure a thin client with HP, but with ThinKiosk it takes about 2 minutes, even if you have the write filter enabled.”

Frank Slomp

Systems Administrator, City Council of Steenwijkerland, NL

Product architecture

Continue your research

Compliance reports

All ThinScale solutions are regularly pen-tested and help maintain compliance with PCI DSS, HIPAA, and GDPR. Read our compliance reports from Coalfire.

Product information

Want to learn more about the product? Read our technical datasheet to understand the key features and benefits in more detail.